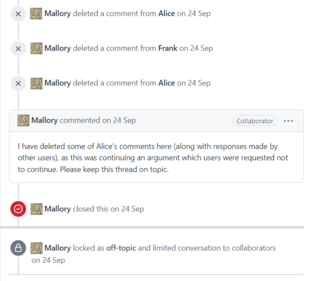

On websites that invite users to post comments, content moderation is the process of detecting contributions that are irrelevant, obscene, illegal, harmful, or insulting, as opposed to useful or informative ones. It is also frequently used for censorship or suppression of opposing viewpoints. The purpose of content moderation is to remove problematic content, apply a warning label to it, or allow users to block and filter content themselves.[1]
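The last option, letting users block and filter content themselves, can be illustrated with a minimal Python sketch. The `Post` and `UserFilter` types, their fields, and the word-matching rule are assumptions made for illustration only; they are not taken from any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    author: str
    text: str


@dataclass
class UserFilter:
    """Per-user preferences (hypothetical structure, for illustration only)."""
    blocked_authors: set[str] = field(default_factory=set)
    muted_words: set[str] = field(default_factory=set)

    def allows(self, post: Post) -> bool:
        # Hide everything written by an author the user has blocked.
        if post.author in self.blocked_authors:
            return False
        # Compare lowercased words, ignoring surrounding punctuation.
        words = {w.strip(".,!?;:").lower() for w in post.text.split()}
        muted = {m.lower() for m in self.muted_words}
        return not (words & muted)


def visible_posts(posts: list[Post], prefs: UserFilter) -> list[Post]:
    """Return only the posts this user has not chosen to hide."""
    return [p for p in posts if prefs.allows(p)]


posts = [
    Post("alice", "Great article!"),
    Post("bob", "Buy cheap pills now"),
    Post("carol", "Spoilers: the ending is sad."),
]
prefs = UserFilter(blocked_authors={"bob"}, muted_words={"spoilers"})
visible = visible_posts(posts, prefs)
```

In this sketch nothing is deleted from the site; the filter only changes what one user sees, which is what distinguishes user-side filtering from removal by a moderator.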

Various types of Internet sites permit user-generated content such as comments, including Internet forums, blogs, and news sites powered by software such as phpBB, a wiki engine, or PHP-Nuke. Depending on the site's content and intended audience, the site's administrators decide what kinds of user comments are appropriate, then delegate the responsibility of sifting through comments to subordinate moderators. Most often, moderators attempt to eliminate trolling, spamming, or flaming, although this varies widely from site to site.

Major platforms use a combination of algorithmic tools, user reporting and human review.[1] Social media sites may also employ content moderators to manually inspect or remove content flagged for hate speech or other objectionable content. Other content issues include revenge porn, graphic content, child abuse material and propaganda.[1] Some websites must also make their content hospitable to advertisements.[1]
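One way to picture how algorithmic tools, user reporting and human review might fit together is the following Python sketch. It is a toy under stated assumptions: the term list, the scoring rule, the thresholds, and the names `automated_score` and `triage` are all hypothetical and do not describe any real platform's system.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    REMOVE = "remove"


@dataclass
class Item:
    text: str
    report_count: int = 0  # number of user reports received so far


# Hypothetical term list standing in for a real classifier.
FLAGGED_TERMS = {"spamlink", "scam"}


def automated_score(text: str) -> float:
    """Toy algorithmic check: fraction of words found in FLAGGED_TERMS."""
    words = text.lower().split()
    if not words:
        return 0.0
    hits = sum(1 for w in words if w in FLAGGED_TERMS)
    return hits / len(words)


def triage(item: Item, remove_at: float = 0.5, review_at: float = 0.25,
           report_limit: int = 3) -> Decision:
    """Combine the automated score and user reports into one decision."""
    score = automated_score(item.text)
    if score >= remove_at:
        # High-confidence automated match: remove without waiting.
        return Decision.REMOVE
    if score >= review_at or item.report_count >= report_limit:
        # Uncertain score or enough user reports: send to a human moderator.
        return Decision.HUMAN_REVIEW
    return Decision.ALLOW


print(triage(Item("scam spamlink scam")))
print(triage(Item("nice photo of the harbour", report_count=4)))
print(triage(Item("lovely weather today")))
```

The design choice shown here is that automation acts alone only on clear-cut cases, while borderline scores and user reports are routed to human reviewers rather than decided automatically.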

In the United States, content moderation is governed by Section 230 of the Communications Decency Act, and several cases concerning the issue have reached the United States Supreme Court, such as Moody v. NetChoice, LLC.

[1] Grygiel, Jennifer; Brown, Nina (June 2019). "Are social media companies motivated to be good corporate citizens? Examination of the connection between corporate social responsibility and social media safety". Telecommunications Policy. 43 (5): 2, 3. doi:10.1016/j.telpol.2018.12.003. S2CID 158295433. Retrieved 25 May 2022.
